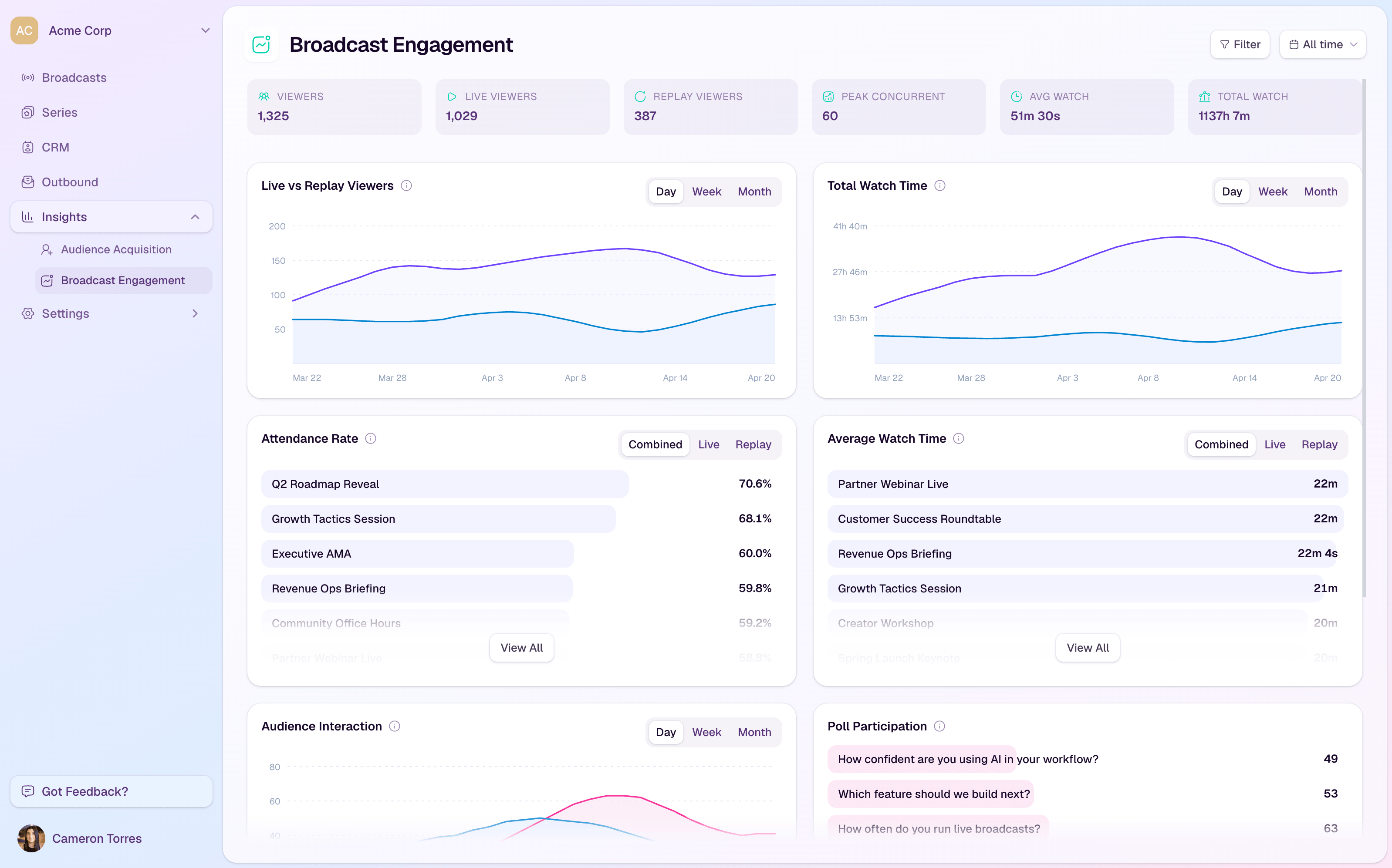

Webinar analytics are easy to collect and hard to use well.

A standard webinar report can show how many people registered, how many attended, and maybe how long the session ran. Those numbers are useful, but they do not answer the question that matters after a B2B webinar: what should we do next?

The best webinar engagement metrics help you understand intent. They show who paid attention, what they cared about, where interest dropped, which offers earned action, and how follow-up should change after the broadcast.

That is the difference between reporting webinar performance and using webinar analytics to improve the whole growth loop.

What Webinar Analytics Should Help You Decide

Webinar analytics should not be a long dashboard of numbers the team glances at once.

They should help you make decisions:

- which registrants deserve immediate follow-up

- which attendees should receive a softer nurture path

- which parts of the session held attention

- which CTAs matched the audience's intent

- which replay viewers showed renewed interest

- which topic, offer, or format should shape the next session

That decision lens matters because not every metric deserves the same weight. Registration volume can tell you whether the topic attracted interest. Attendance can show whether the audience made time for it. But questions, watch time, CTA clicks, replay behavior, and follow-up actions usually tell you more about what people actually did with that interest.

For B2B teams, the goal is not to crown one perfect webinar KPI. The goal is to build a practical view of audience intent.

The Engagement Metrics Worth Tracking

The right webinar metrics should cover the journey from registration through post-event action. Each one answers a different question.

| Metric | What It Helps You Understand |
|---|---|
| Registration-to-attendance rate | Whether the topic and reminder flow created enough live interest |
| Live attendance and watch time | Who showed up and how deeply they stayed with the session |
| Drop-off points | Where the session lost attention or where viewers got what they needed |
| Questions, chat, and poll responses | Which themes created active participation |
| CTA views and clicks | Which offer moved attention into action |
| Replay views and replay watch time | Who came back later or engaged after missing the live session |
| Follow-up actions | Whether the webinar created a useful next step after the event |

None of these metrics is complete on its own. A long watch time without a CTA click may show interest, but not urgency. A CTA click may show intent, but the meaning depends on the offer. A question may indicate buying interest, education interest, or confusion.

The value comes from reading the signals together.

Registration and Attendance Show Topic Pull

Registration volume is the earliest signal that a topic has market pull.

It tells you whether the promise of the webinar was interesting enough for someone to exchange their details and reserve time. But registration by itself is still only a light signal. People register for webinars they never attend, forward invites to teammates, or sign up because the topic is relevant but not urgent.

That is why attendance matters as the second layer. If many people register but few attend, the topic may be too broad, the reminder flow may be weak, or the audience may not feel the live session is worth prioritizing.

The useful question is not simply "was attendance good?" It is "what does the gap between registration and attendance tell us about the audience's urgency?"

If the gap is large, the next move may be better reminder messaging, a sharper title, a shorter format, or stronger reasons to attend live rather than wait for the replay.
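As a rough illustration of that gap, the registration-to-attendance rate is simply live attendees divided by registrants. The sketch below uses hypothetical function names and made-up numbers; it is not tied to any particular webinar platform's export format.

```python
def attendance_rate(registered: int, attended: int) -> float:
    """Share of registrants who attended live, as a fraction between 0 and 1."""
    if registered <= 0:
        return 0.0  # avoid dividing by zero when nobody registered
    return attended / registered


def attendance_gap(registered: int, attended: int) -> int:
    """Number of registrants who did not attend live."""
    return max(registered - attended, 0)


# Hypothetical numbers, for illustration only.
registered, attended = 400, 140
rate = attendance_rate(registered, attended)
gap = attendance_gap(registered, attended)
print(f"{rate:.0%} attended live; {gap} registrants did not")
```

What counts as a "large" gap depends on the audience and the format, so the number is most useful when compared across your own sessions rather than against a fixed benchmark.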

Watch Time and Drop-Off Show Depth of Interest

Attendance tells you who entered the room. Watch time tells you who stayed.

That distinction is important. A viewer who joins for five minutes and leaves should not receive the same follow-up as someone who watches most of the session, returns for the replay, or stays through the final CTA.

Watch time helps you understand depth of interest at the contact level. Drop-off points help you understand where the session lost attention at the content level.

Both are useful.

At the audience level, drop-off can show where the format dragged, where the intro ran long, or where a section failed to match the promise of the webinar. At the contact level, watch time can help shape follow-up. Someone who watched deeply may be ready for a practical next step. Someone who left early may need a short recap, a timestamped section, or a different resource.
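One way to find audience-level drop-off is to turn each attendee's exit time into a retention curve and look for the steepest fall. The sketch below assumes a simplified model in which every viewer joins at the start and watches continuously until they leave; the function names, the five-minute step, and the sample data are all illustrative assumptions.

```python
def retention_curve(leave_minutes, session_length, step=5):
    """Fraction of attendees still watching at each checkpoint minute."""
    total = len(leave_minutes)
    if total == 0:
        return []
    curve = []
    for minute in range(0, session_length + 1, step):
        still_watching = sum(1 for m in leave_minutes if m >= minute)
        curve.append((minute, still_watching / total))
    return curve


def steepest_drop(curve):
    """Return (start_minute, end_minute, loss) for the interval that lost the most viewers."""
    best = None
    for (m0, r0), (m1, r1) in zip(curve, curve[1:]):
        loss = r0 - r1
        if best is None or loss > best[2]:
            best = (m0, m1, loss)
    return best


# Hypothetical exit times (in minutes) for eight attendees of a 30-minute session.
leave_minutes = [5, 12, 12, 13, 30, 30, 30, 30]
curve = retention_curve(leave_minutes, session_length=30)
start, end, loss = steepest_drop(curve)
print(f"Largest drop: minutes {start}-{end}, {loss:.0%} of attendees lost")
```

The same per-viewer exit times double as the contact-level signal: someone who left at minute 5 and someone who stayed to minute 30 can be routed to different follow-up.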

This is where analytics become more useful than a simple attendee count. The team needs to see behavior in context, not just export a list of names.

Questions, Chat, and Polls Show What People Care About

Interactive moments are useful because they reveal what the audience is thinking while the topic is still fresh.

Questions often show where people need detail. Chat can reveal objections, use cases, or internal language. Polls can show where the audience is in the buying or implementation journey.

But these signals need careful interpretation. A high volume of chat messages does not automatically mean buying intent. A poll response does not mean someone is ready for sales. A question can come from curiosity, confusion, evaluation, or urgency.

The better approach is to treat interaction data as qualitative context for follow-up and future content.

For example:

- repeated questions about setup may suggest a need for implementation content

- questions about integrations may suggest a CRM or workflow concern

- questions about measurement may suggest the audience needs help proving value internally

- poll responses showing early-stage maturity may suggest a softer educational follow-up

This is why audience intelligence matters. The value is not just knowing that someone interacted. It is understanding what that interaction says alongside their registration, attendance, CTA, replay, and follow-up history.

CTA Clicks Show Action, Not Just Attention

CTA clicks are one of the clearest webinar engagement metrics because they show that someone moved from watching to acting.

That action might be booking a demo, downloading a guide, registering for the next session, joining a waitlist, starting a trial, or visiting a product page. The meaning depends on the CTA.

A demo CTA usually signals different intent from a checklist CTA. A replay CTA may mean something different from a live CTA. A click near the beginning of the webinar may mean the offer was obvious. A click after a specific section may mean that section created the moment of interest.

That is why CTA analytics should not stop at total clicks. The team should ask:

- which CTA did the viewer see?

- when did it appear?

- what topic or section came before it?

- did the viewer click during the live session or replay?

- did the click lead to a meaningful next step?
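Those questions suggest what a single CTA record needs to capture. The sketch below is one possible shape, with hypothetical field names and sample data, plus a small helper that compares click rates by whatever dimension the team cares about (section, CTA, or live versus replay).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CtaEvent:
    """One viewer seeing one CTA. All field names are illustrative."""
    cta_name: str            # which CTA the viewer saw
    shown_at_minute: int     # when it appeared in the session
    preceding_section: str   # topic or section that came just before it
    mode: str                # "live" or "replay"
    clicked: bool
    led_to_next_step: bool = False  # e.g. a booked demo after the click


def click_rate_by(events, key):
    """Click rate per group, where `key` maps an event to its group label."""
    views = Counter(key(e) for e in events)
    clicks = Counter(key(e) for e in events if e.clicked)
    return {group: clicks[group] / views[group] for group in views}


# Hypothetical events, for illustration only.
events = [
    CtaEvent("Book a demo", 25, "Pricing walkthrough", "live", True, True),
    CtaEvent("Book a demo", 25, "Pricing walkthrough", "live", False),
    CtaEvent("Download checklist", 8, "Intro", "live", False),
    CtaEvent("Book a demo", 25, "Pricing walkthrough", "replay", True),
]
print(click_rate_by(events, key=lambda e: e.preceding_section))
print(click_rate_by(events, key=lambda e: e.mode))
```

Keeping the section and mode on each record is what makes it possible to go beyond total clicks without a separate tracking setup for every question.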

For practical examples of matching CTAs to the viewer's moment, see the guide to webinar CTA examples. The strongest webinar CTAs work because they fit the audience's context, not because they are simply more aggressive.

With any conversion tool, the point is to connect the offer to the moment when intent appears. A click that can be tied to that moment is a far more useful engagement signal than a raw click count.

Replay Behavior Shows Continued Interest

Replay analytics deserve more attention than they usually get.

A replay viewer is often engaging under different conditions from a live attendee. They may have missed the session but still care about the topic. They may be revisiting a section before sharing internally. They may have attended live and returned because a specific point mattered.

That makes replay behavior a useful second intent moment.

Track who watched the replay, how much they watched, whether they attended live first, whether they returned more than once, and whether they clicked a replay CTA. Those signals help you avoid treating every no-show or replay viewer the same way.

A registrant who never watches may need a short reason to revisit the topic. A replay viewer who watches deeply may need a more specific next step. A viewer who clicks a replay CTA should receive follow-up tied to that action, not a generic "thanks for watching" message.
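A minimal sketch of that segmentation might look like the following. The segment labels, the function names, and the 50% "deep watch" threshold are assumptions made for illustration, not fixed rules.

```python
def replay_segment(attended_live: bool, replay_views: int) -> str:
    """Label a registrant by how live attendance and replay viewing combine."""
    if replay_views == 0:
        return "live only" if attended_live else "no-show, no replay"
    if attended_live:
        return "live, then replay"
    return "replay only"


def is_deep_replay(replay_watch_fraction: float, threshold: float = 0.5) -> bool:
    """True when the viewer watched at least `threshold` of the replay (assumed cutoff)."""
    return replay_watch_fraction >= threshold


# Hypothetical registrants: (attended_live, replay_views, replay_watch_fraction)
registrants = [(True, 0, 0.0), (False, 0, 0.0), (False, 2, 0.8), (True, 1, 0.2)]
for attended_live, views, fraction in registrants:
    label = replay_segment(attended_live, views)
    print(label, "| deep replay:", is_deep_replay(fraction))
```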

Replay analytics are especially useful when connected to a broader webinar follow-up system. The replay should not sit outside the rest of the engagement record.

Follow-Up Actions Show Whether the Data Became Useful

The final layer of webinar analytics is what happens after the event.

This does not mean every webinar needs to be judged by a single revenue number. That can create messy attribution and overclaiming, especially when the sales cycle is longer or the webinar is educational.

But teams should still track whether webinar engagement led to useful next steps:

- replies to follow-up

- visits to the recommended resource

- demo or sales conversations

- trial or signup actions

- registrations for the next related event

- meaningful CRM activity tied to the audience record

These signals help close the loop. They show whether the webinar created useful movement after the live moment ended.

They also improve the next session. If one topic creates strong watch time but weak CTA action, the offer may need work. If one CTA earns clicks but little follow-up movement, the landing page or next step may be mismatched. If replay viewers engage more deeply than live attendees, the team may have a content packaging or timing opportunity.

Good webinar analytics do not end with the report. They feed the next decision.

How to Interpret Webinar Engagement Signals

Once the signals are collected, the useful work is interpretation.

A simple model can help:

| Pattern | What It May Suggest | Useful Next Step |
|---|---|---|
| High watch time and CTA click | Strong topic fit and clear next-step interest | Follow up around the clicked offer |
| High watch time, no CTA click | Interest without obvious action | Send a relevant resource or softer next step |
| Low watch time, later replay view | Topic interest, poor timing | Highlight the most useful replay section |
| Poll or question activity | Specific pain point or evaluation context | Follow up with content tied to the question |
| Registration only | Light interest or low urgency | Send a concise reason to watch or join the next session |

This model is intentionally simple. The goal is not to turn webinar analytics into a complicated scoring system. It is to stop treating every registrant, attendee, and replay viewer as if they behaved the same way.
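For teams that want to apply the table consistently, it can be expressed as a handful of ordered rules. The sketch below is one possible reading, not a definitive implementation: the 50% watch-time threshold, the field names, and the rule order are assumptions, and a contact who matches several rows simply receives the first match. Cases the table does not cover, such as a brief live visit with no other activity, fall back to the recap suggested for low-engagement contacts.

```python
from dataclasses import dataclass

HIGH_WATCH = 0.5  # assumed cutoff for "high watch time"; tune to your own sessions


@dataclass
class Engagement:
    """Signals for one contact. Field names are illustrative."""
    live_watch_fraction: float = 0.0  # 0.0 means registered but never attended live
    clicked_cta: bool = False
    watched_replay: bool = False
    asked_or_polled: bool = False


def suggest_next_step(e: Engagement) -> str:
    """Return the next step for the first matching row, checked in table order."""
    high_watch = e.live_watch_fraction >= HIGH_WATCH
    if high_watch and e.clicked_cta:
        return "Follow up around the clicked offer"
    if high_watch:
        return "Send a relevant resource or softer next step"
    if e.watched_replay:
        return "Highlight the most useful replay section"
    if e.asked_or_polled:
        return "Follow up with content tied to the question"
    if e.live_watch_fraction == 0:
        return "Send a concise reason to watch or join the next session"
    return "Send a recap or softer educational resource"


# Hypothetical contacts, for illustration only.
print(suggest_next_step(Engagement(live_watch_fraction=0.9, clicked_cta=True)))
print(suggest_next_step(Engagement(live_watch_fraction=0.1, watched_replay=True)))
print(suggest_next_step(Engagement()))
```

Returning a plain next-step label rather than a numeric score keeps the model as simple as the table it mirrors.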

That is where the buyer-intent value appears. Not in one metric, but in the pattern.

Turn Analytics Into Better Follow-Up

The most practical use of webinar analytics is follow-up.

A single generic post-webinar email is easy to send, but it wastes the signals the audience just gave you. A viewer who asked a detailed question, watched the full session, and clicked a CTA deserves a different message from someone who registered and never attended.

Follow-up can stay simple:

- send high-engagement viewers a specific next step

- send CTA clickers a message tied to the clicked offer

- send replay viewers a follow-up based on what they watched

- send low-engagement contacts a recap or softer educational resource

- use repeated questions to guide future content or sales enablement

This is the practical reason to connect webinar analytics with audience records and follow-up workflows. The data should help the team respond faster and more relevantly.

The Takeaway

Webinar analytics should do more than summarize what happened.

They should help your team understand who engaged, what they cared about, what action they took, and what should happen next.

Attendance, watch time, questions, polls, CTA clicks, replay behavior, and follow-up actions are all useful signals. But they become much more powerful when they are read together.

The best webinar engagement metrics do not just fill a report. They create a feedback loop: run the session, capture the signals, act on them, and make the next broadcast sharper.

That is how a webinar becomes more than a one-off event. It becomes part of a repeatable live growth engine.